1. Setting up Anyway

Anyway (anyd) must be running on every GPU machine you want to manage.

Start Anyway

anyd [--port=9100]

The optional --port flag sets the port that anyd listens on. Once running, anyd will accept commands from the Control Manager.
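To confirm that anyd is reachable before moving on, you can probe its port from another machine on the network. The following is a minimal Python sketch, assuming anyd listens on a plain TCP port (9100, as in the command above). The function names are hypothetical helpers and are not part of Anyway.

```python
import socket

# Assumption: 9100 is the port shown in the anyd command above.
ANYD_DEFAULT_PORT = 9100


def is_port_open(host, port=ANYD_DEFAULT_PORT, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and opens a TCP connection;
        # the with-block closes the socket again immediately.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


def unreachable_machines(hosts, port=ANYD_DEFAULT_PORT, timeout=2.0):
    """Return the hosts from the given list that do not accept a connection."""
    return [host for host in hosts if not is_port_open(host, port, timeout)]
```

Calling `unreachable_machines` with the list of your GPU machines returns the ones that did not answer. A successful connection only shows that something is listening on the port, not that it is anyd, so treat this as a quick network check rather than a health check.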

2. Setting up Anyway Control Manager

Anyway Control Manager (any-cm) can run on a machine with or without a GPU.

It serves a browser-based User Interface that you use to manage your machine cluster.

Start the Control Manager

any-cm [--port=3000]

The User Interface is then available at http://<control-manager-ip>:3000.

The Control Manager (any-cm) communicates with each Anyway process (anyd) running on your machines.
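The address of the User Interface follows directly from the Control Manager host and the port it was started with. As a small illustration, the Python sketch below builds that address. It assumes plain HTTP and port 3000 as shown in this guide; `control_manager_url` is a hypothetical helper, not an Anyway function.

```python
import ipaddress

# Assumption: 3000 is the port shown in the any-cm command in this guide.
DEFAULT_CM_PORT = 3000


def control_manager_url(host, port=DEFAULT_CM_PORT):
    """Build the browser address of the Control Manager User Interface."""
    if not 1 <= port <= 65535:
        raise ValueError(f"invalid TCP port: {port}")
    try:
        # IPv6 literals must be wrapped in brackets inside a URL.
        is_ipv6 = ipaddress.ip_address(host).version == 6
    except ValueError:
        # Not an IP literal, so treat it as a hostname.
        is_ipv6 = False
    host_part = f"[{host}]" if is_ipv6 else host
    return f"http://{host_part}:{port}"
```

If you start any-cm with a different --port value, pass the same value as `port` so the address matches.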

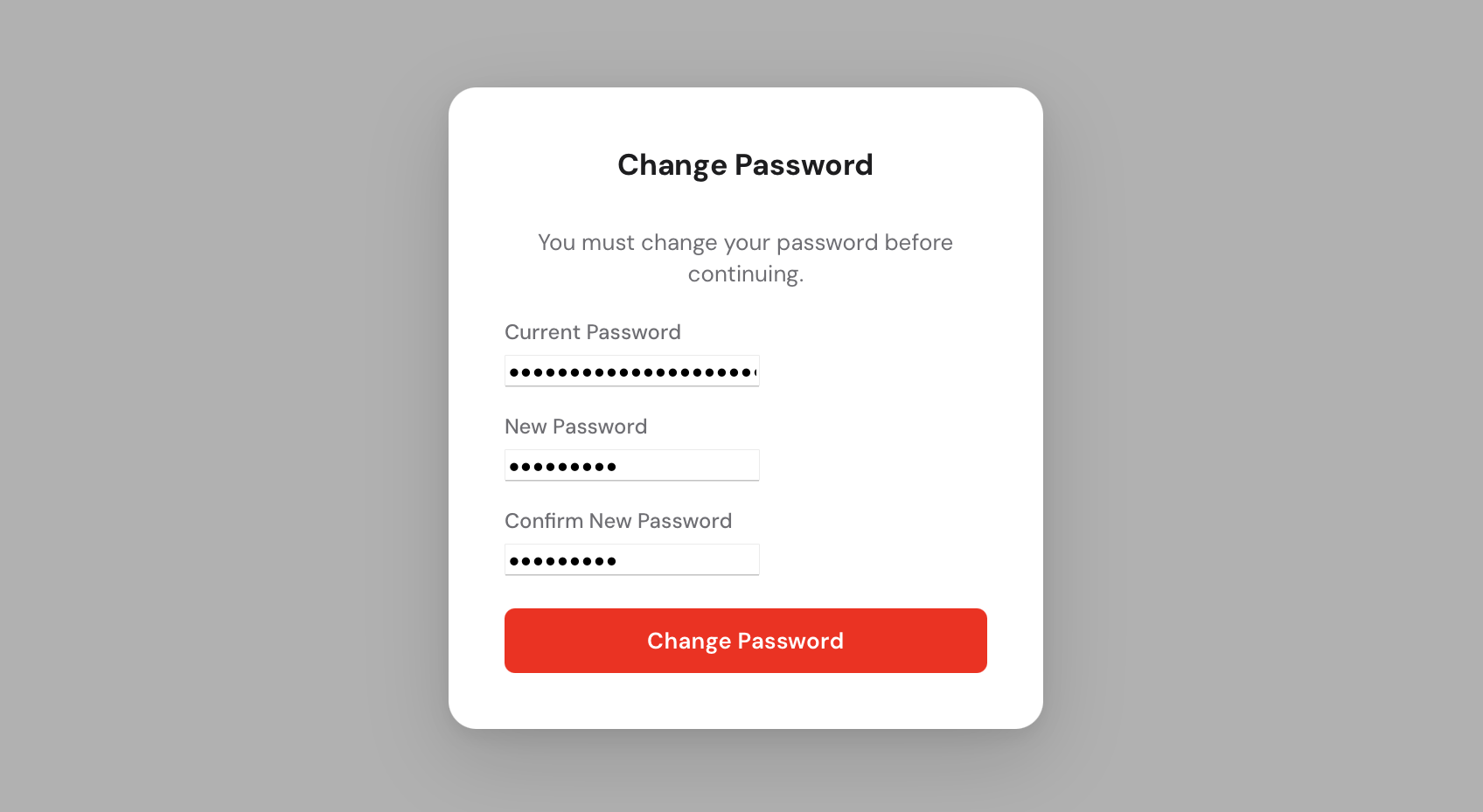

On first start, the default username is admin and a random admin password is generated and printed to the console. Copy it before closing the terminal; you will need it to log in. You will be prompted to change it immediately after your first login.

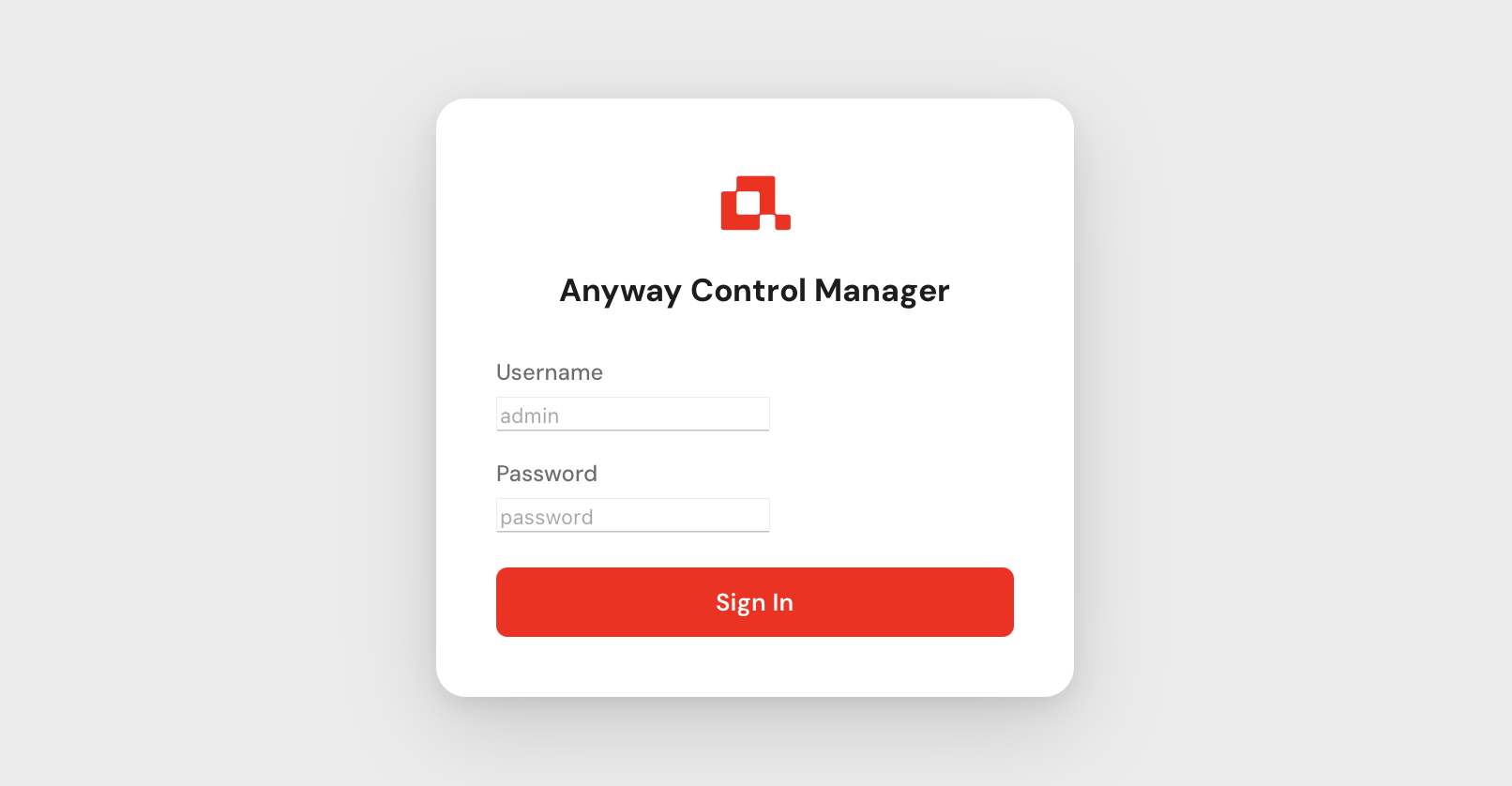

3. Logging in

Open your browser and navigate to http://<control-manager-ip>:3000.

You will be greeted by the login screen.

Use admin as the username and the password that was printed to the console when you first started any-cm. After logging in, you will be asked to set a new permanent password.

You now have access to the Control Manager.

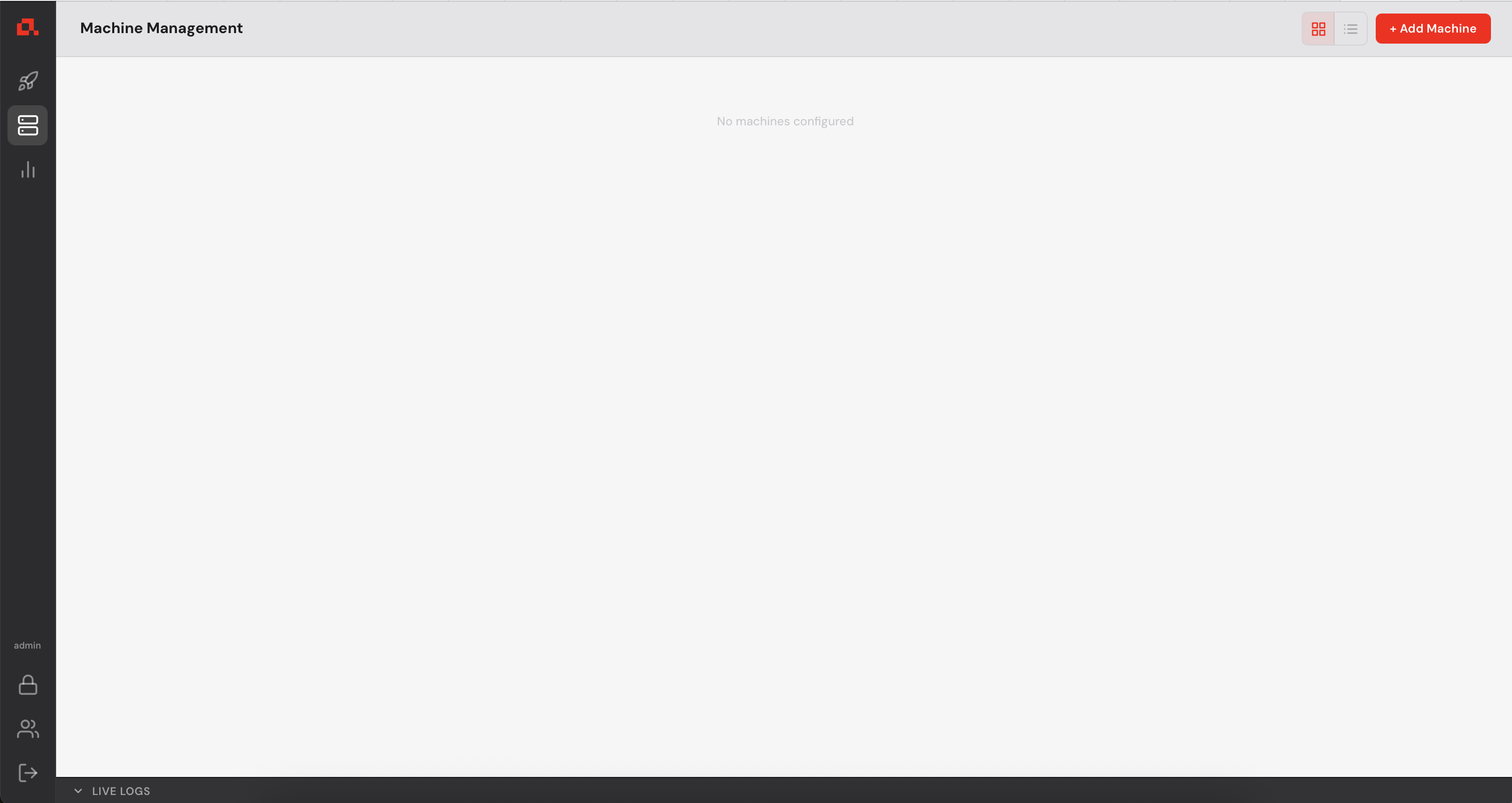

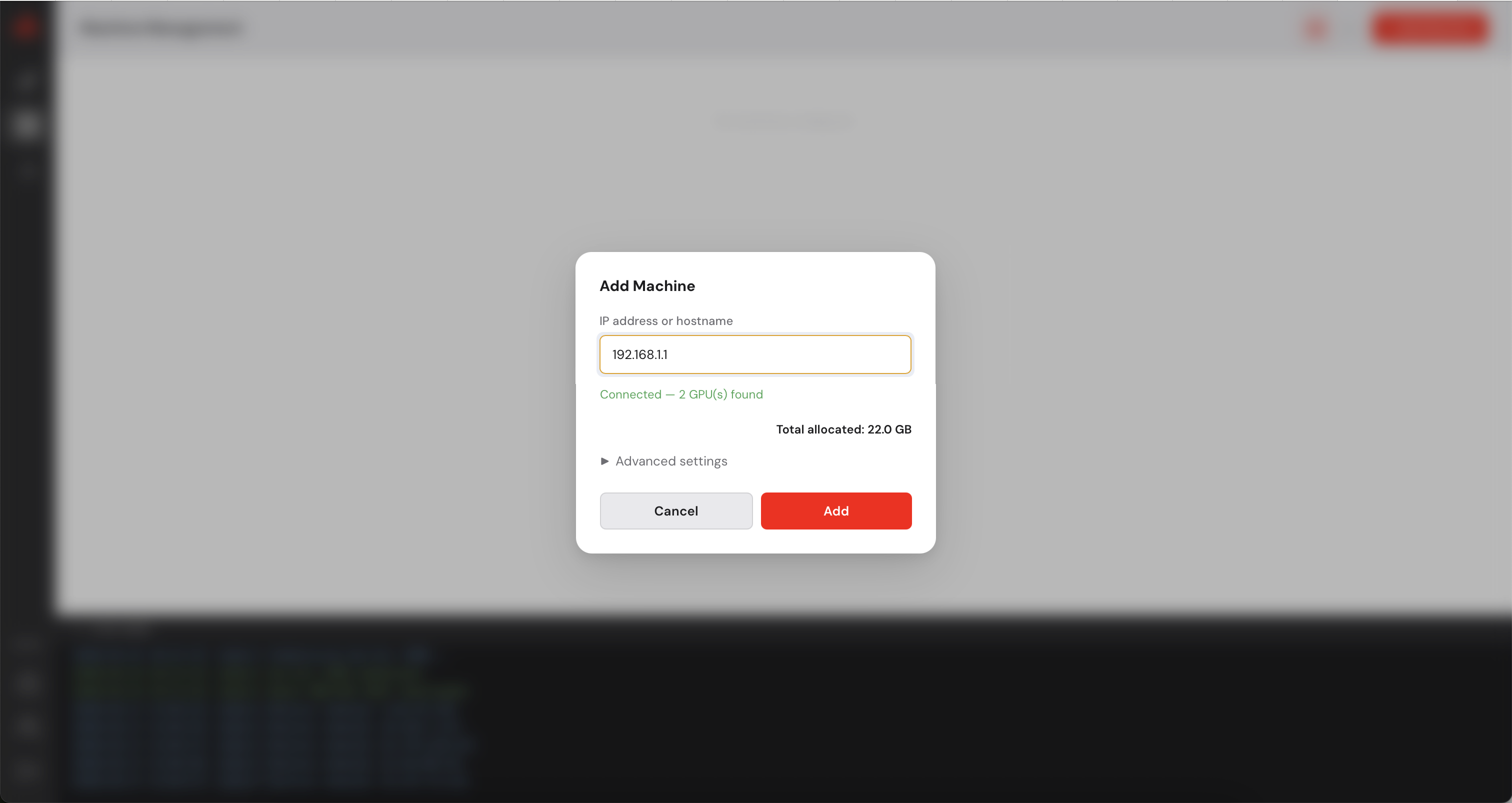

4. Adding Machines

Before deploying any model, you need to register your GPU machines with the Control Manager.

Each machine must be running anyd (see step 1).

To register a machine, use the button in the top right corner, as shown in the screenshots below.

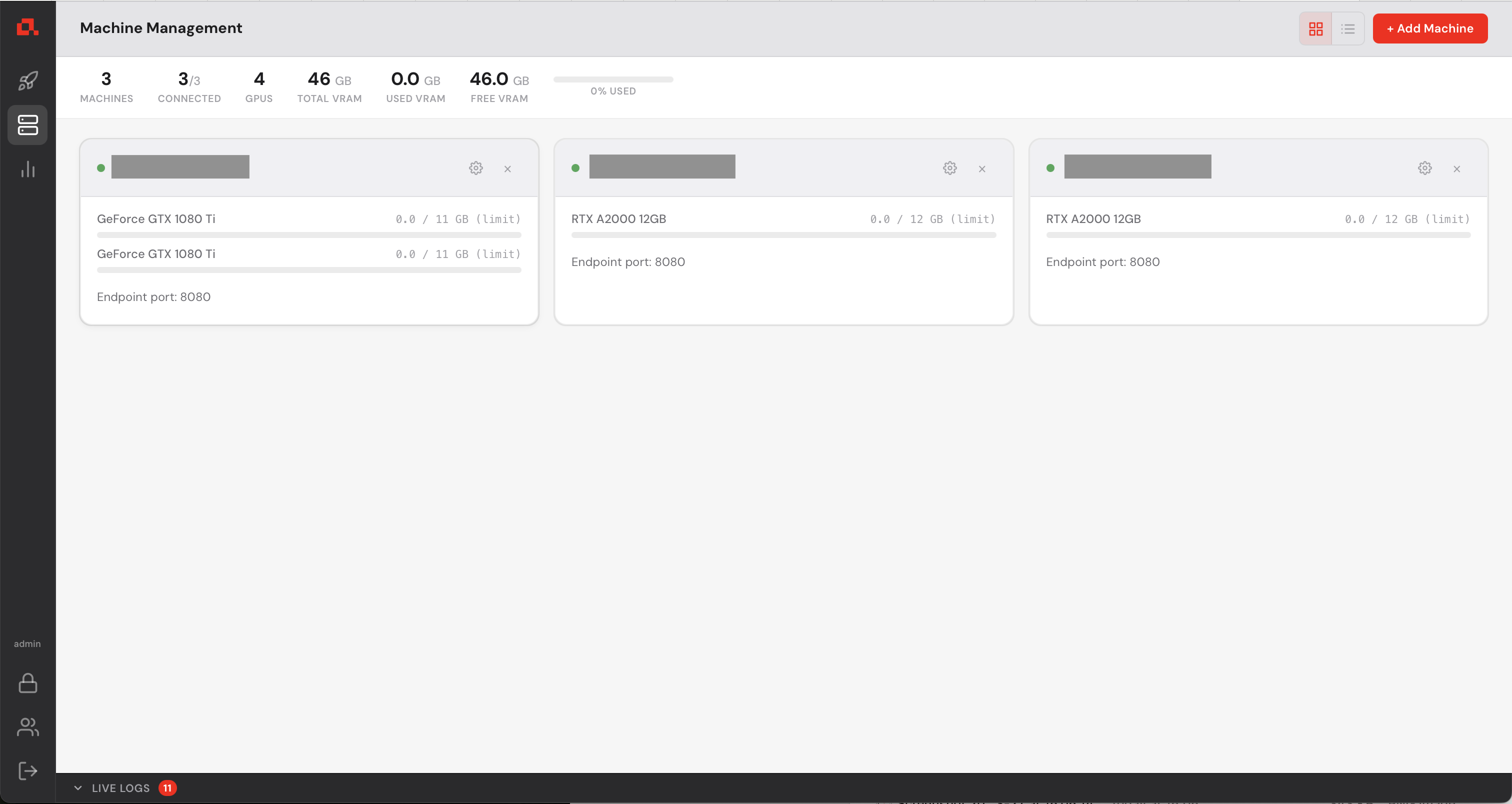

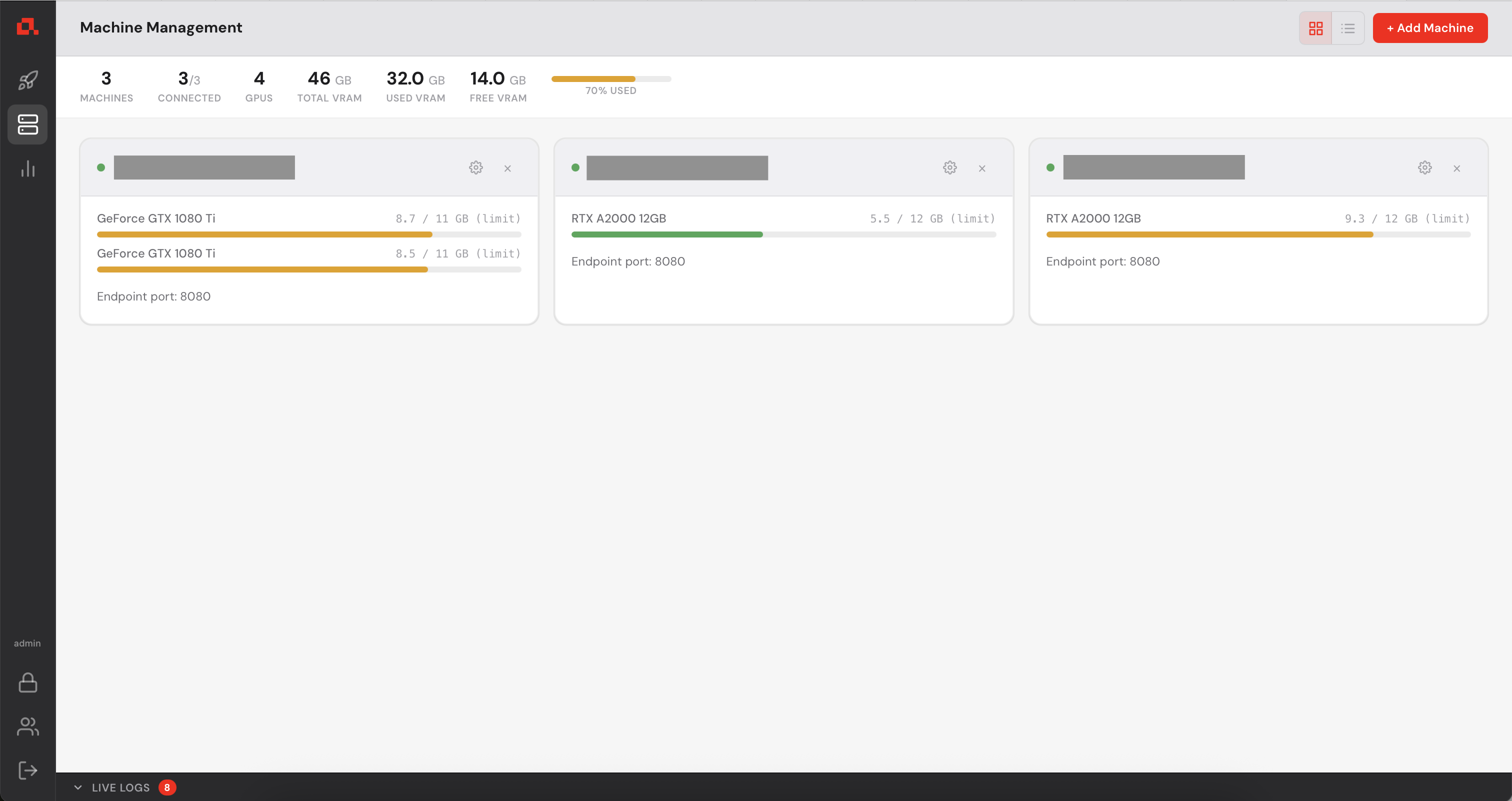

Once added, your machines appear in the Machine view as shown below (the IP addresses in the screenshots have been anonymized).

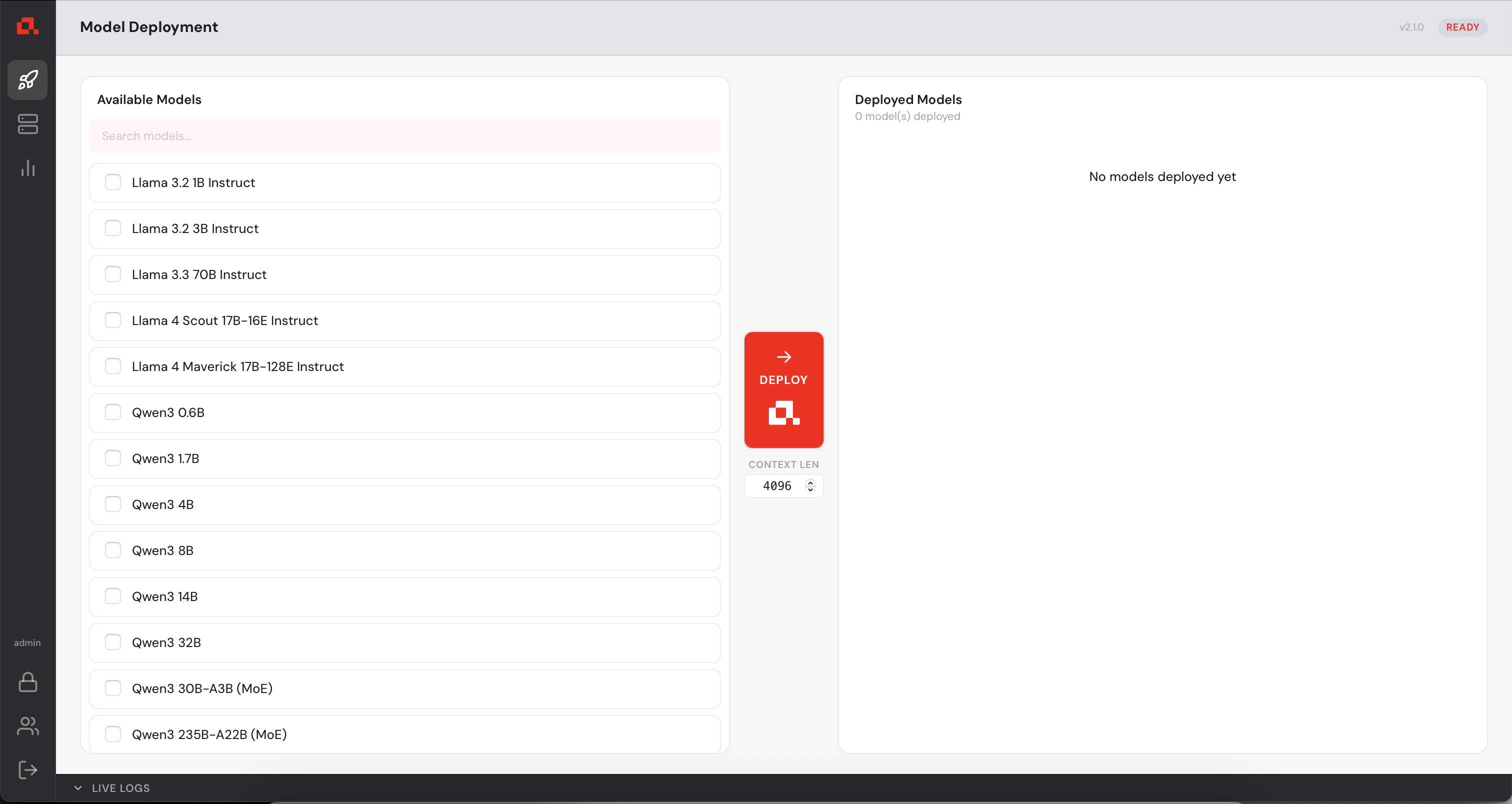

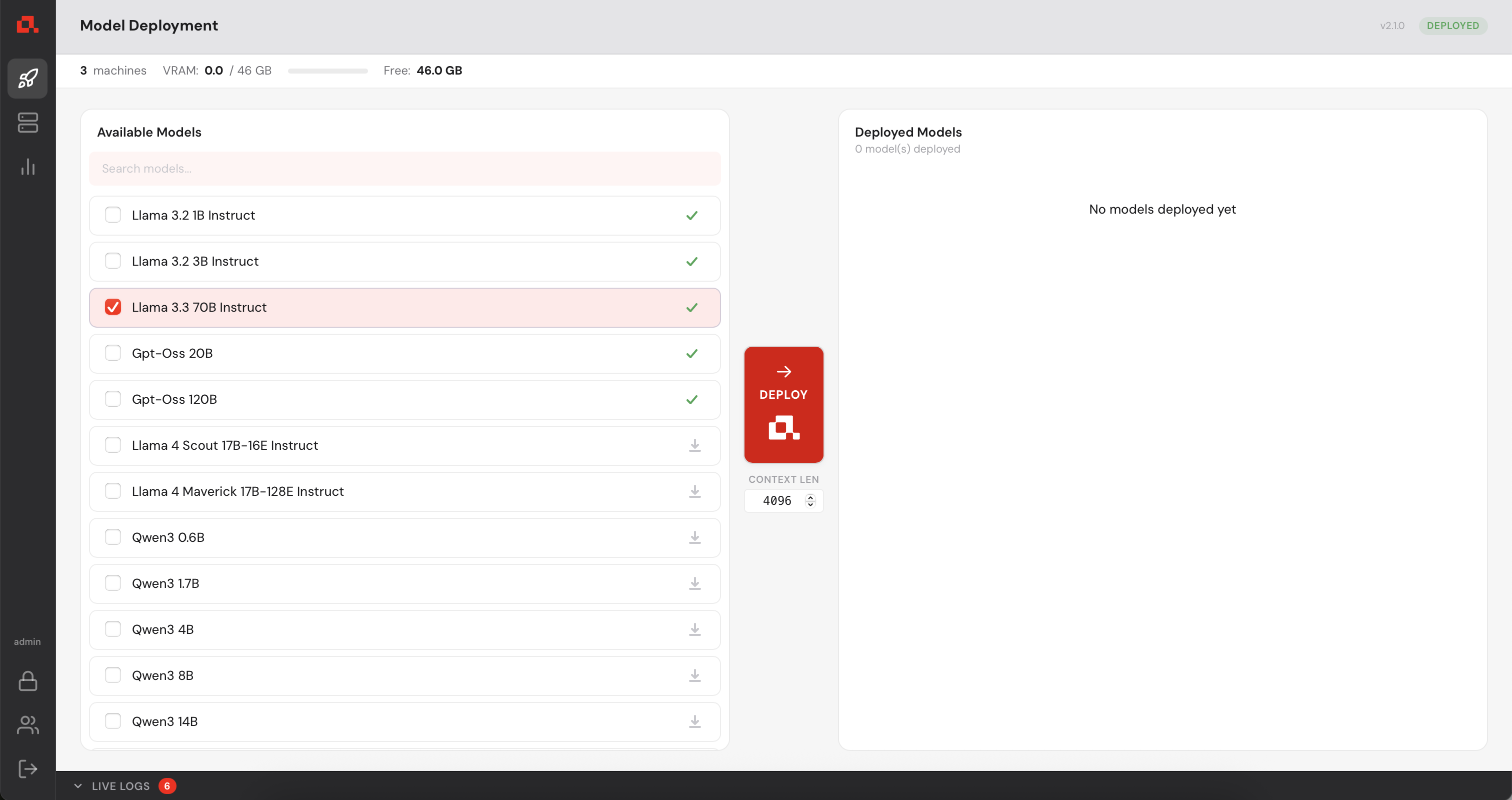

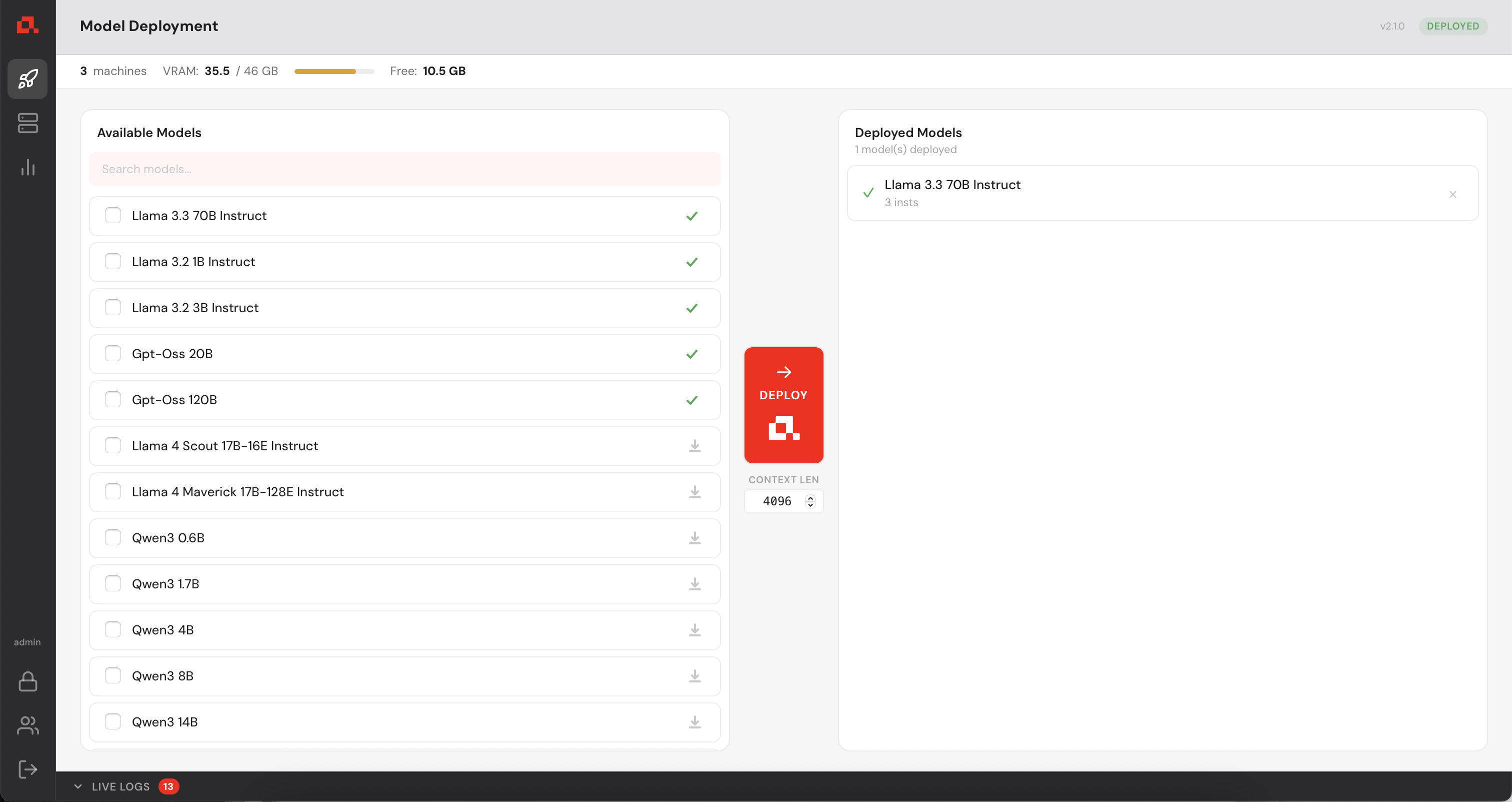

5. Deploying a Model


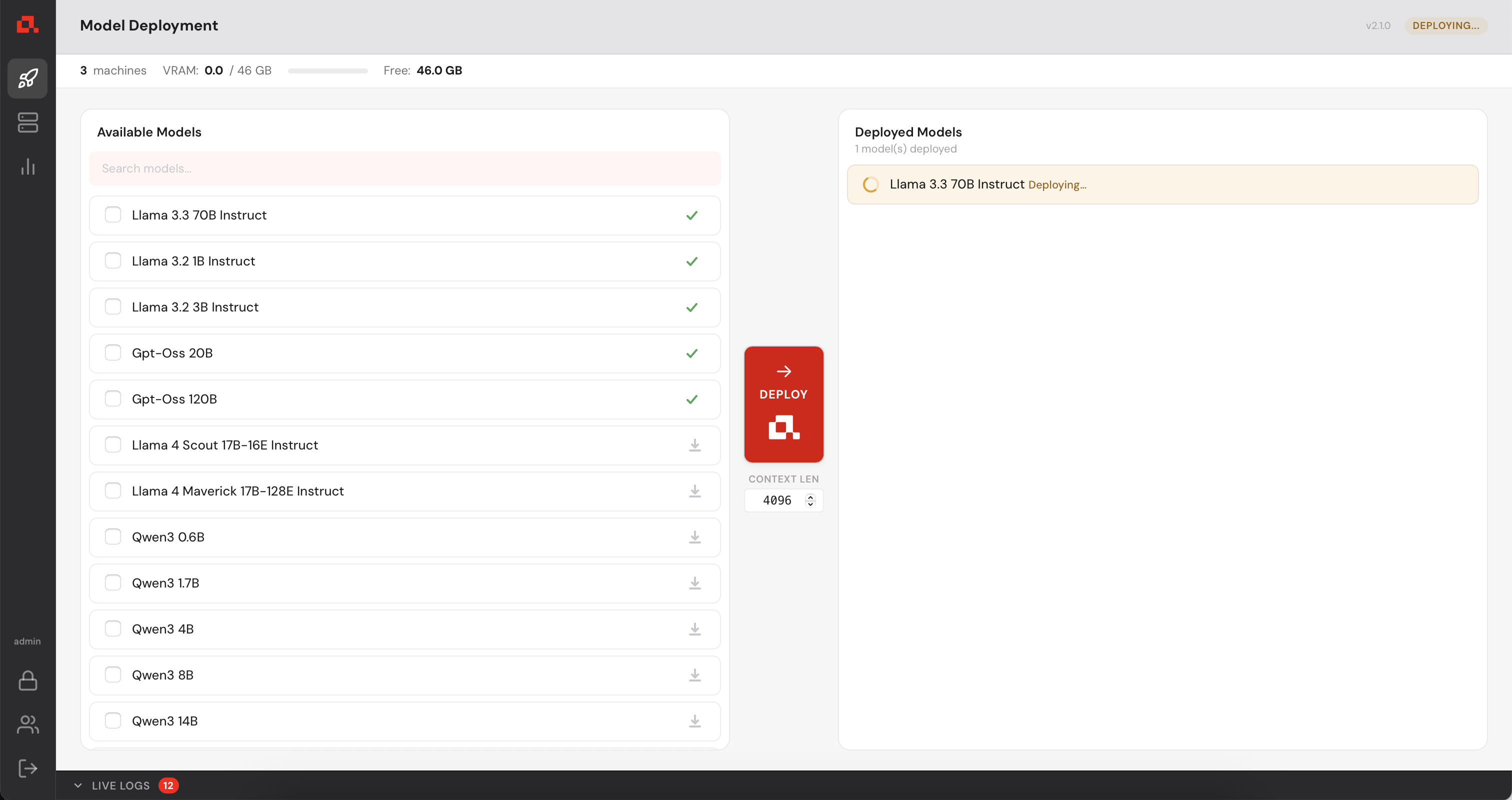

The model is first shown as deploying, then as deployed.

In the Machine panel, you can see how the model is distributed across your machines.

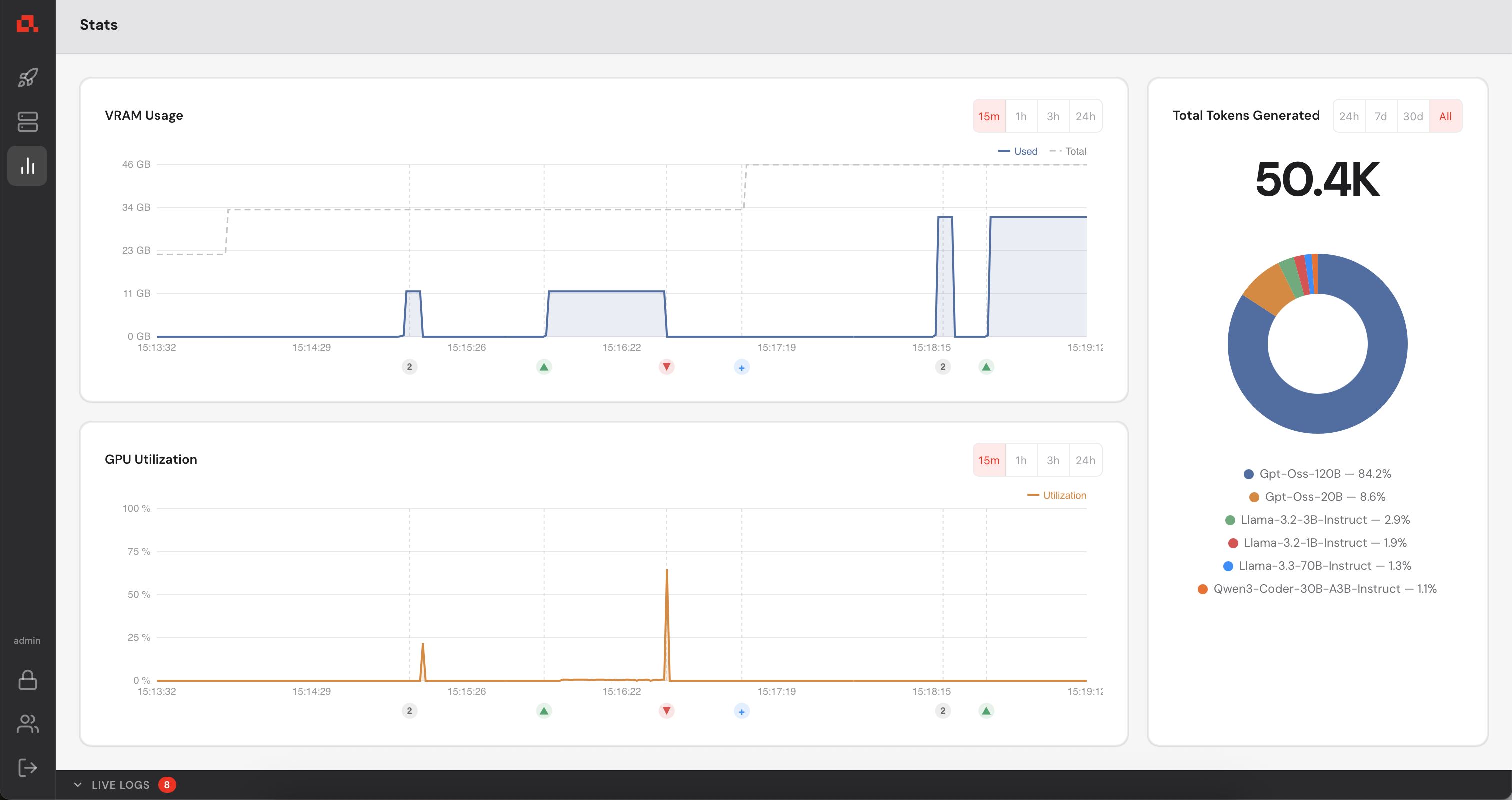

6. Viewing Statistics

The Control Manager continuously collects GPU metrics from every registered machine (updated every second). You can view live and historical data from the Statistics panel.
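To get a feel for how much data a one-second update interval produces, the Python sketch below estimates the number of data points collected per day. The GPU count per machine and the number of metrics per GPU are illustrative assumptions: this guide does not state which metrics are collected or how they are stored.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def samples_per_day(machines, gpus_per_machine, metrics_per_gpu,
                    interval_seconds=1.0):
    """Estimate how many data points are collected in one day.

    interval_seconds defaults to the one-second update rate stated above;
    all other parameters are assumptions you supply for your own cluster.
    """
    if interval_seconds <= 0:
        raise ValueError("interval_seconds must be positive")
    # Number of samples taken for a single metric on a single GPU.
    samples_per_series = int(SECONDS_PER_DAY / interval_seconds)
    return machines * gpus_per_machine * metrics_per_gpu * samples_per_series
```

For instance, a hypothetical cluster of 4 machines with 8 GPUs each and 5 metrics per GPU gives `samples_per_day(4, 8, 5)`, which is 13,824,000 data points per day.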

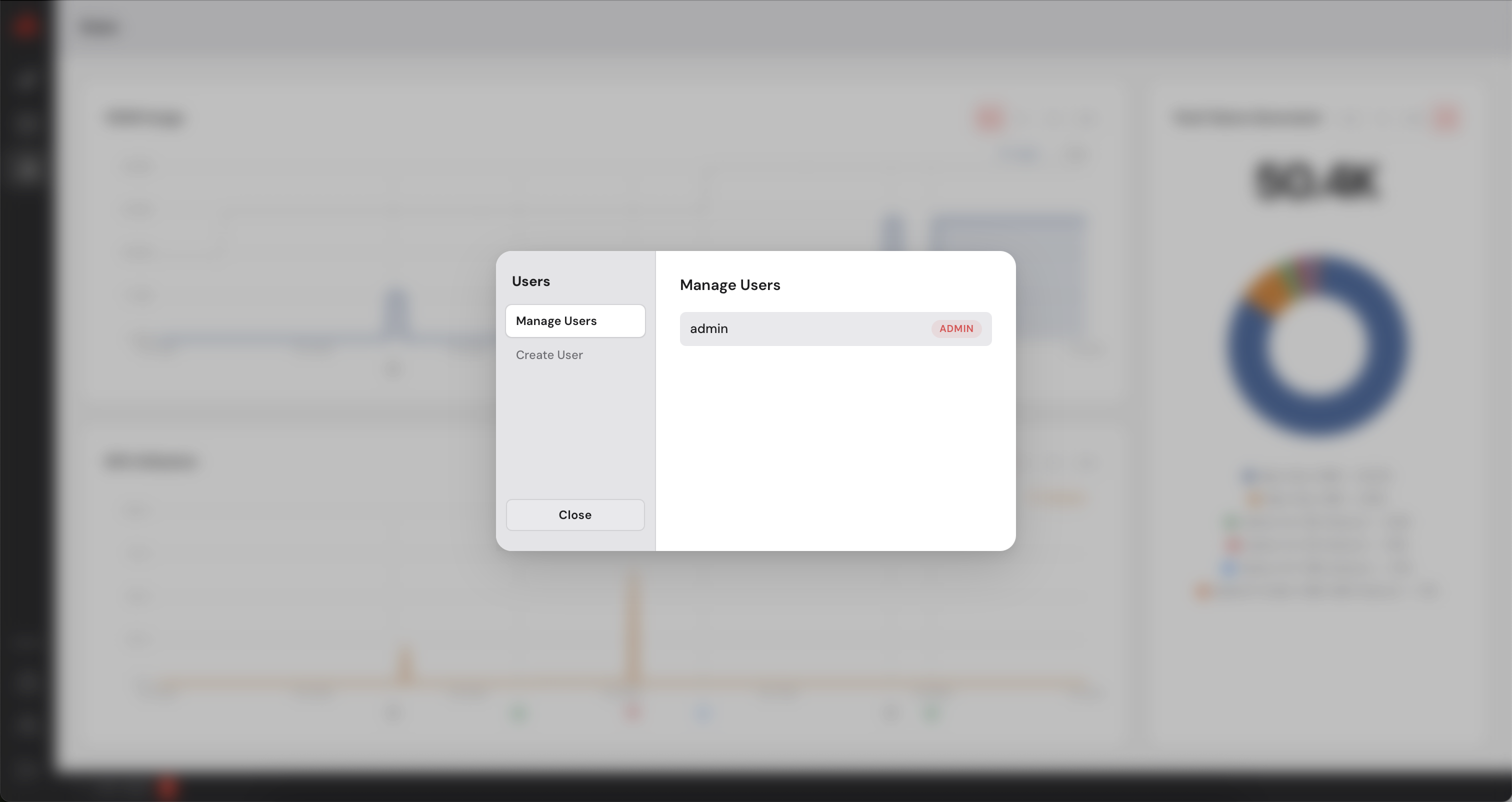

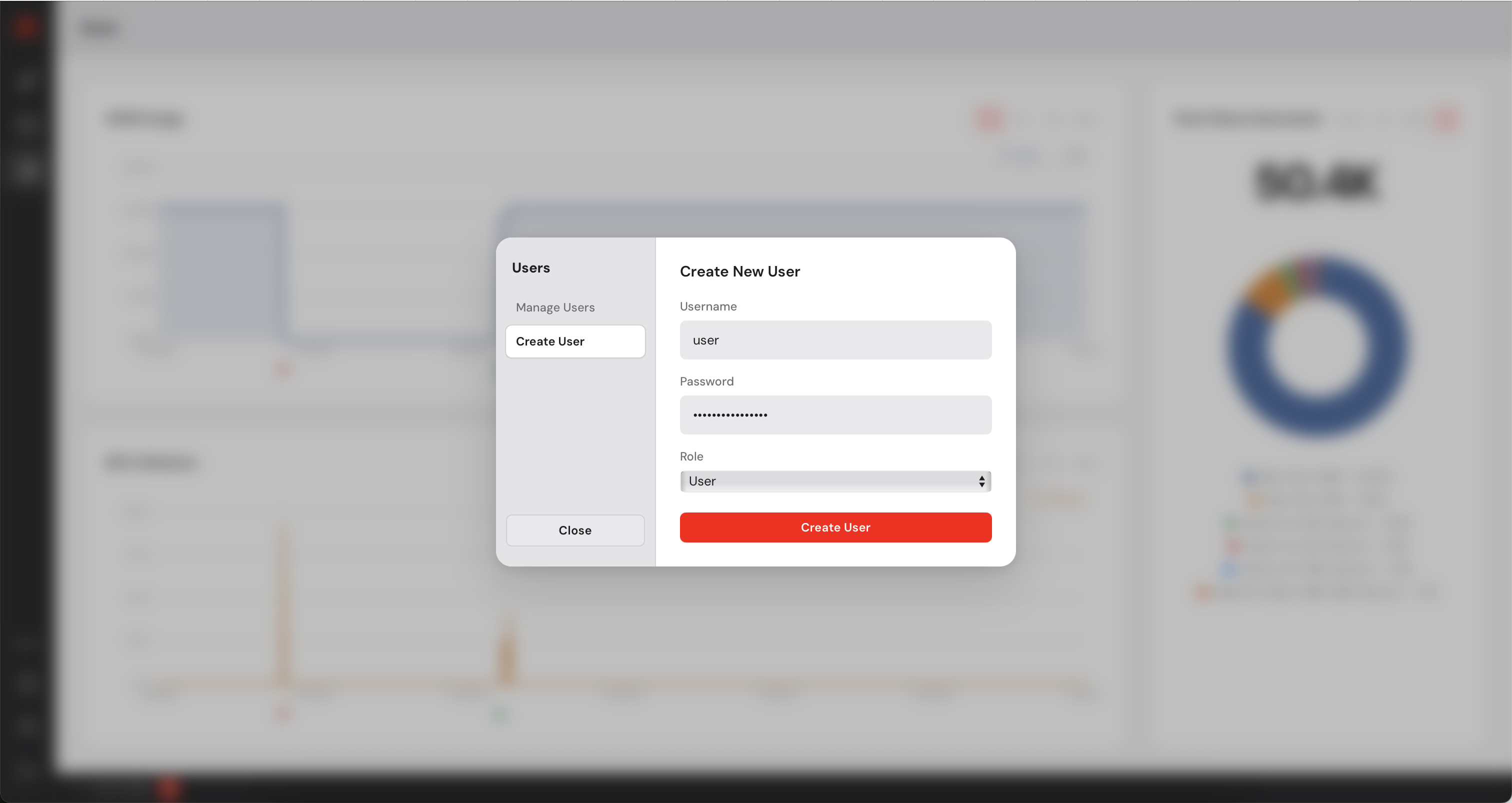

7. Managing Users

The Control Manager has a built-in user management system. As an administrator, you can create additional accounts for colleagues who need access to the dashboard. Accounts can either have admin privileges or be simple users. Simple users cannot add or remove machines, but they can deploy models on the available machines.
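The difference between the two account types can be summarized as a small permission table. The Python sketch below is only an illustration of the rules described above; the names `Role`, `Action` and `is_allowed` are hypothetical and do not reflect how the Control Manager is implemented. Restricting user creation to administrators is an assumption inferred from the text.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "admin"
    USER = "user"  # the "simple user" described above


class Action(Enum):
    ADD_MACHINE = "add_machine"
    REMOVE_MACHINE = "remove_machine"
    DEPLOY_MODEL = "deploy_model"
    CREATE_USER = "create_user"


# Admins may do everything; simple users may only deploy models.
# Assumption: only admins can create users (implied, not stated explicitly).
PERMISSIONS = {
    Role.ADMIN: frozenset(Action),
    Role.USER: frozenset({Action.DEPLOY_MODEL}),
}


def is_allowed(role, action):
    """Return True if the given role may perform the given action."""
    return action in PERMISSIONS[role]
```

With this table, `is_allowed(Role.USER, Action.DEPLOY_MODEL)` is true, while `is_allowed(Role.USER, Action.ADD_MACHINE)` is false.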

Need Help?

Email: contact@anyway.dev

Documentation: Additional resources and advanced configuration options available upon request.